Emergency Evacuation App

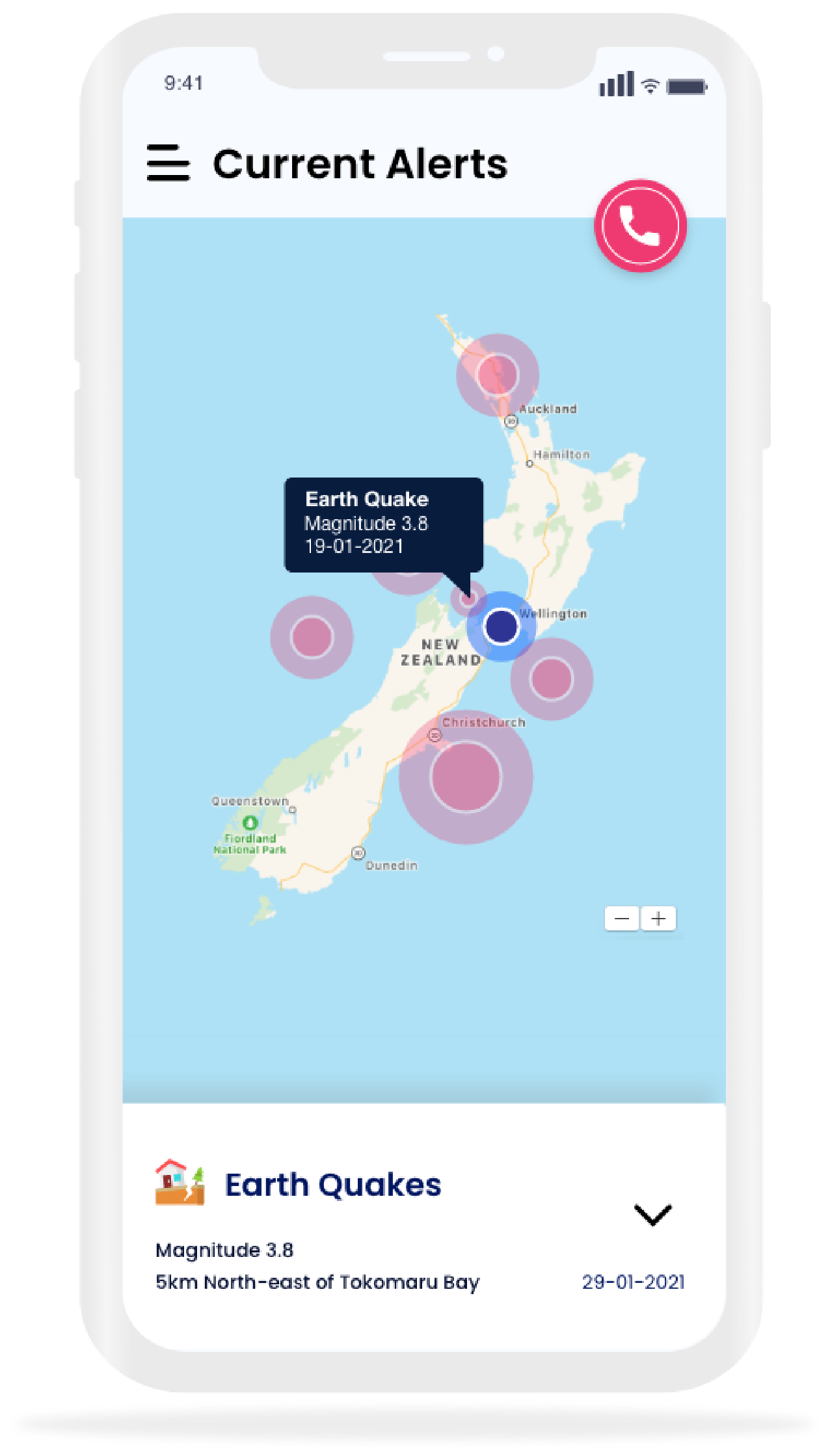

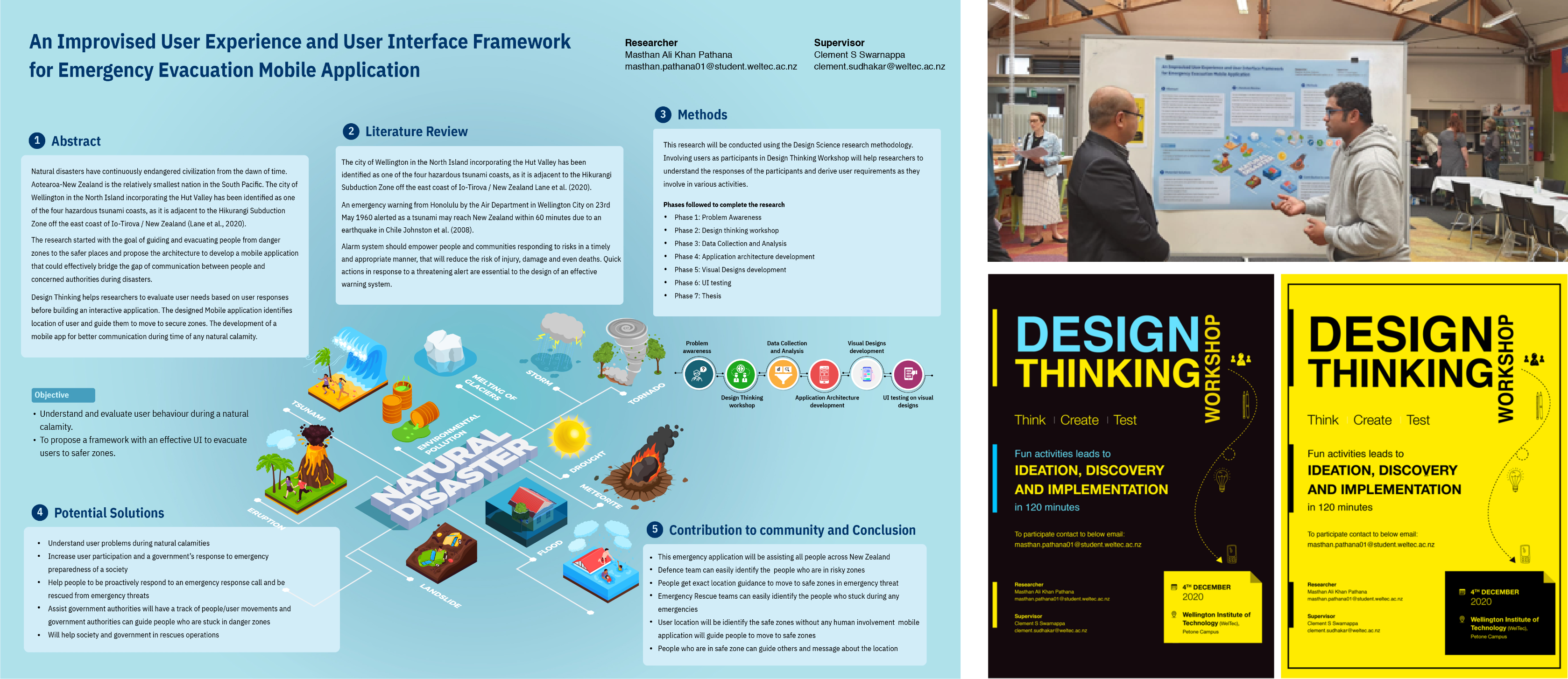

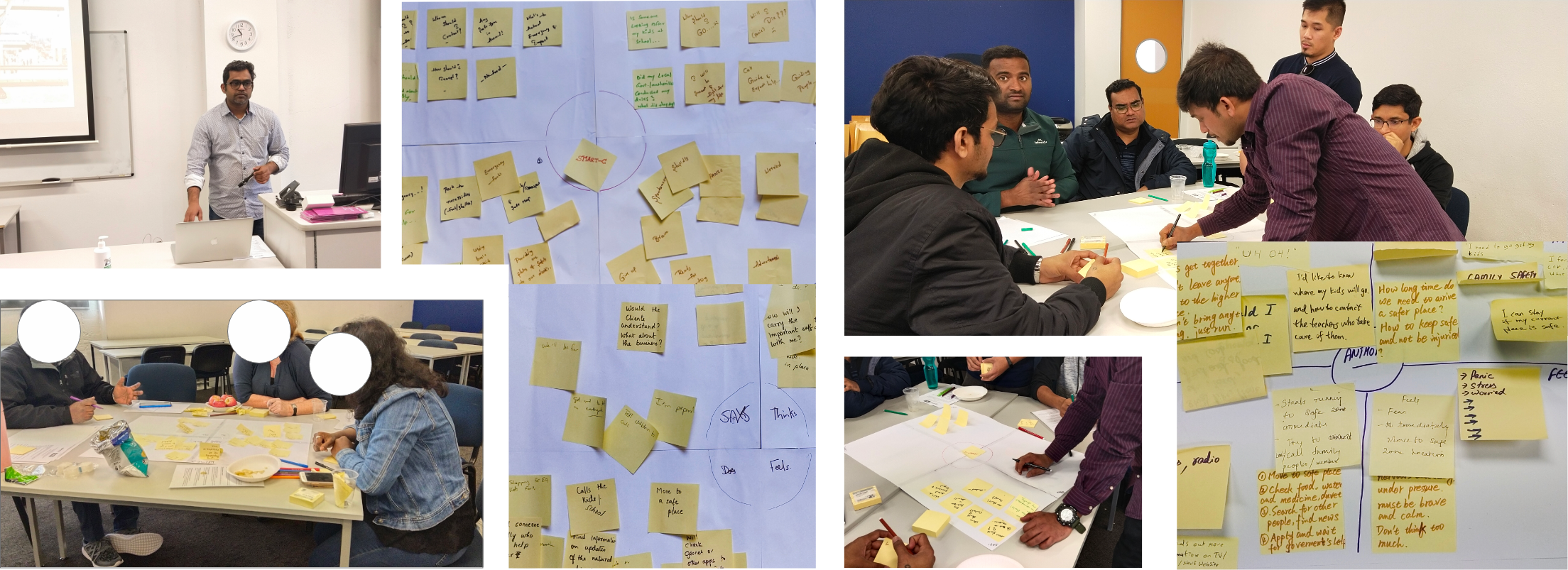

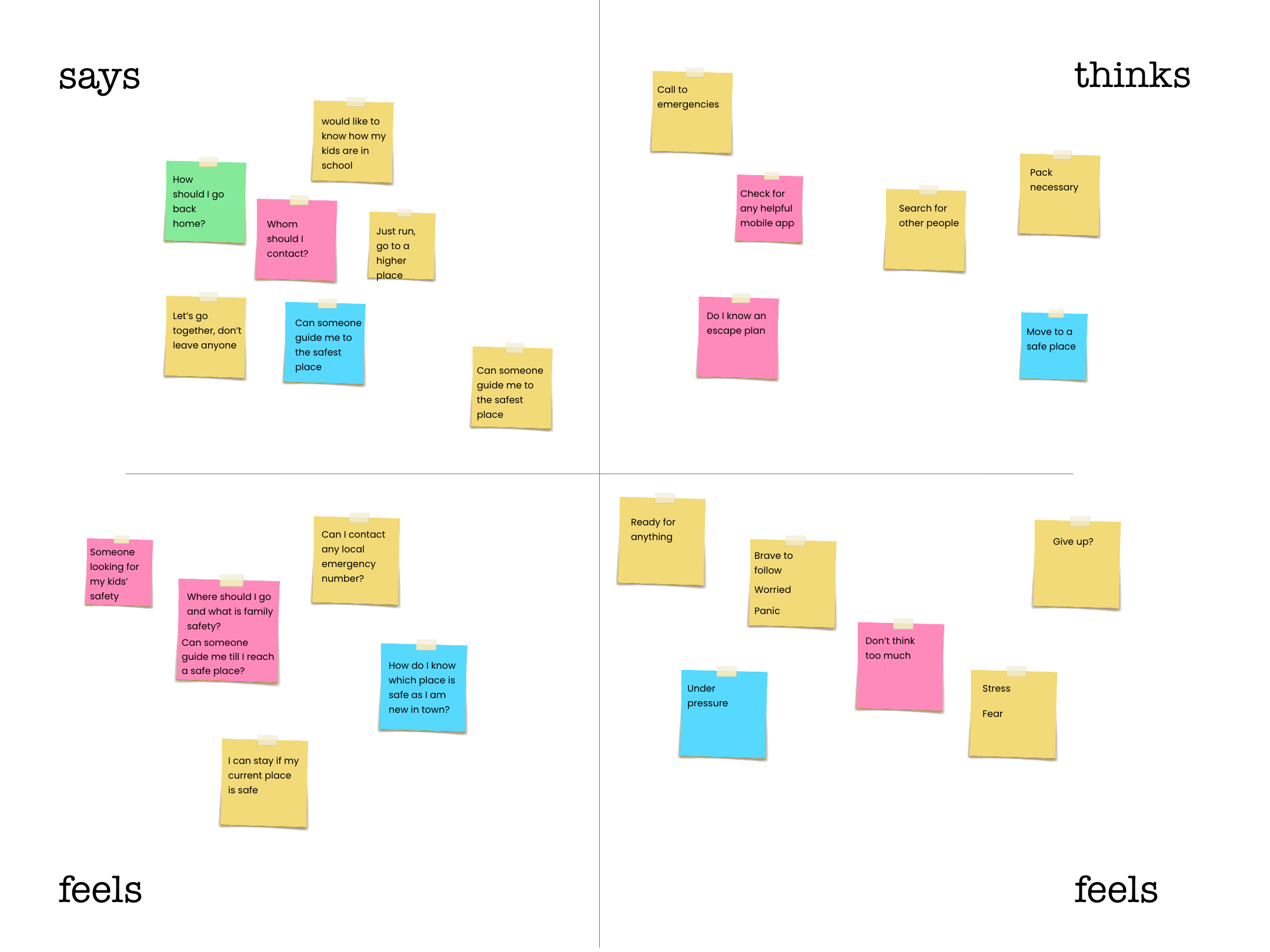

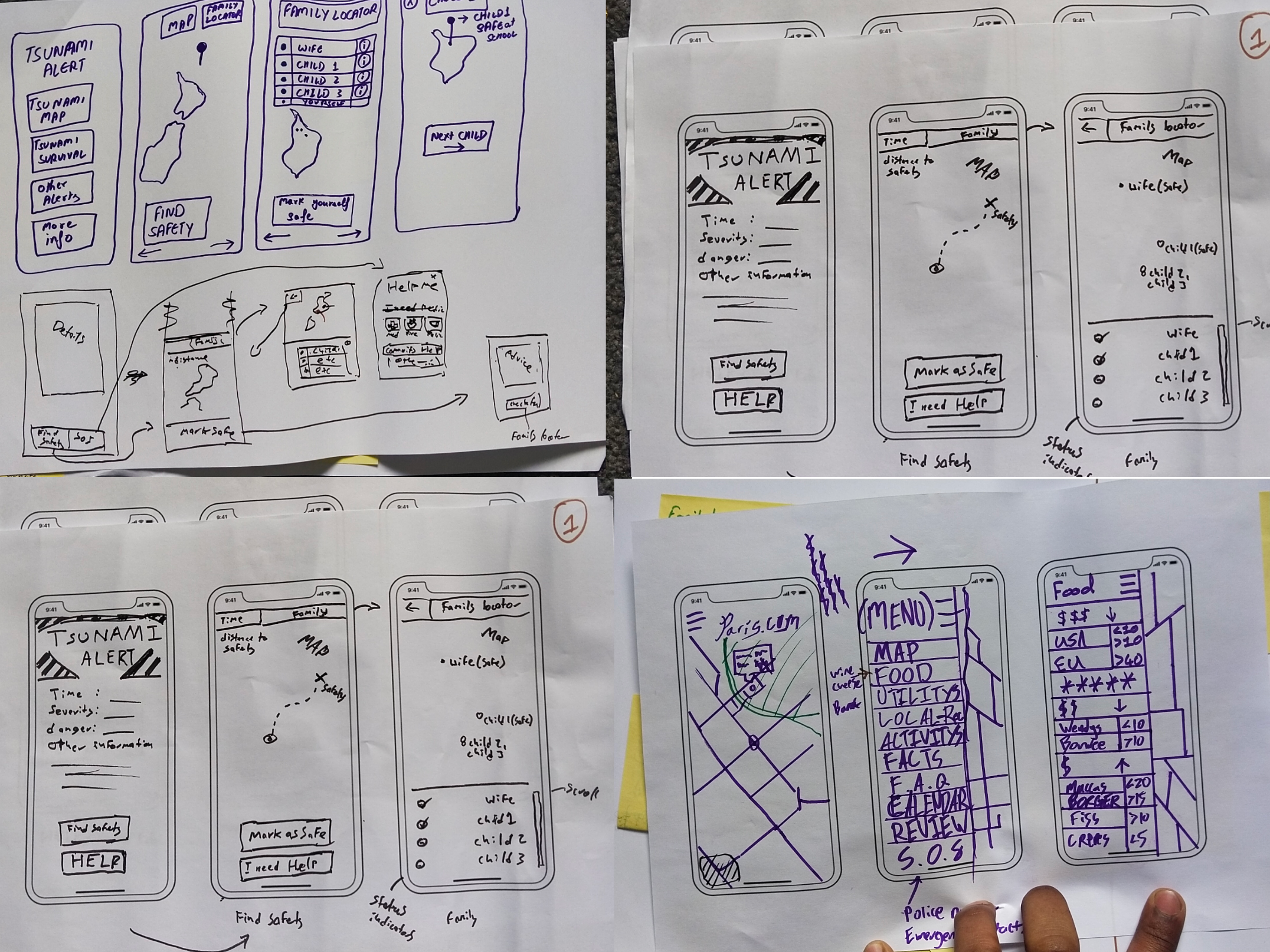

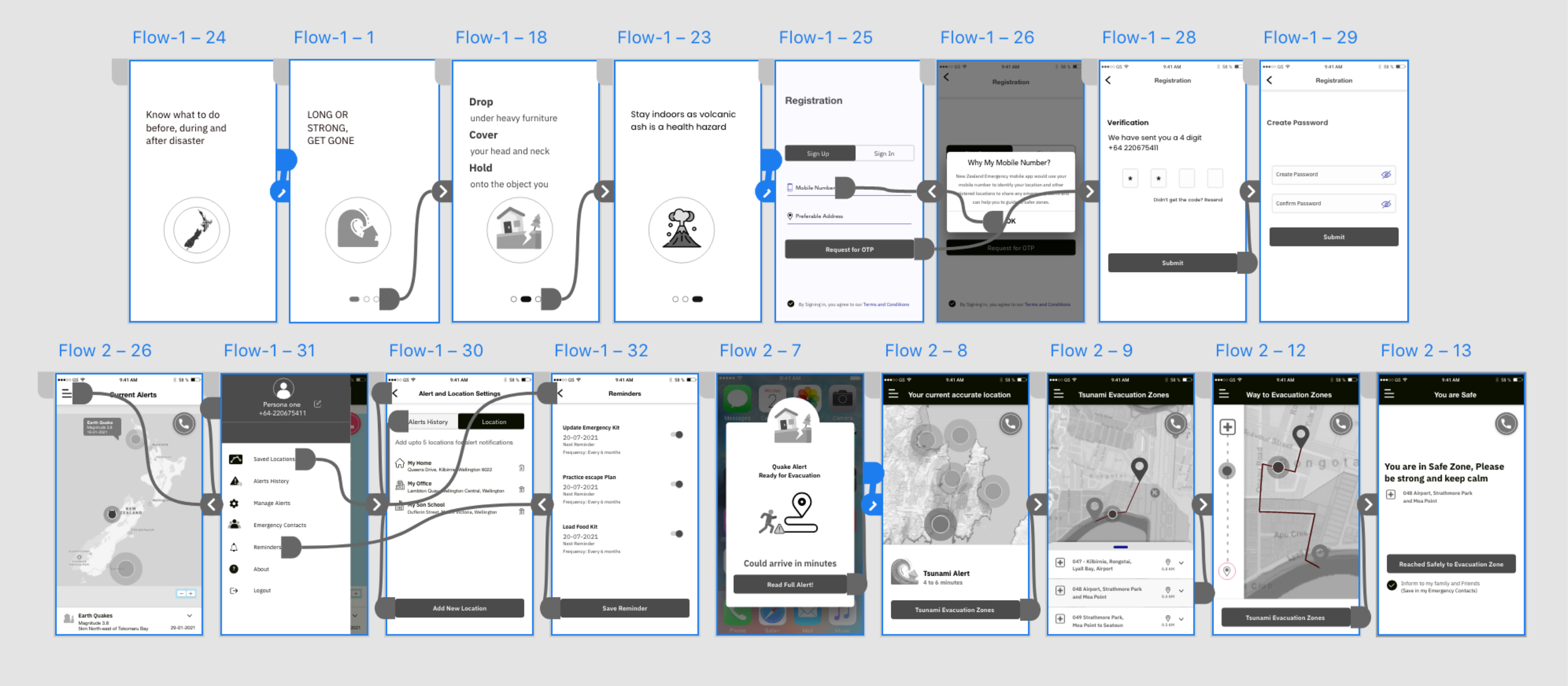

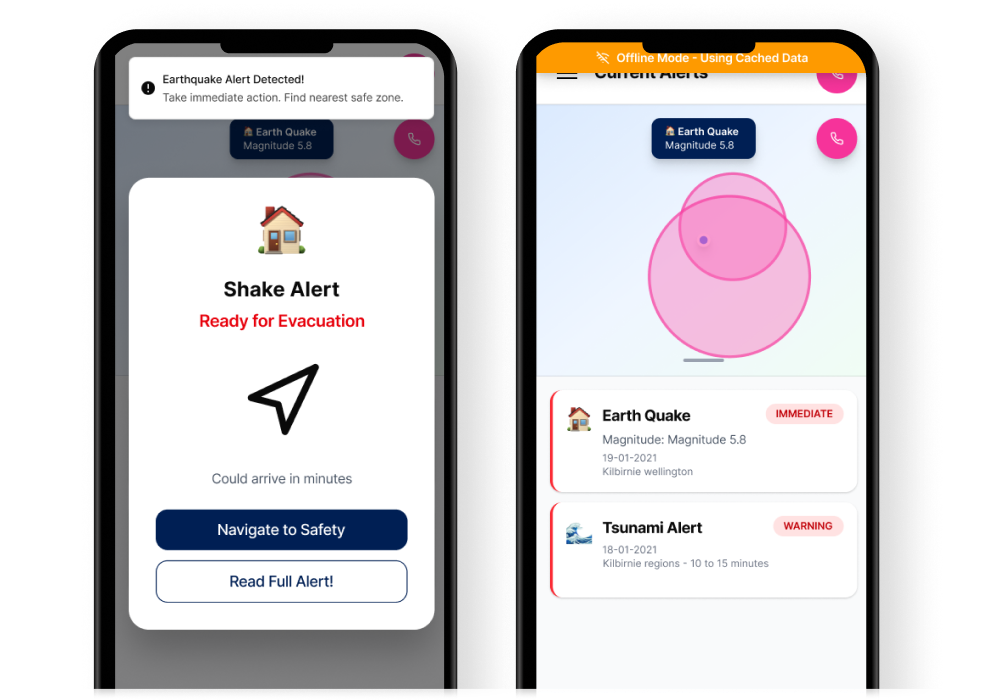

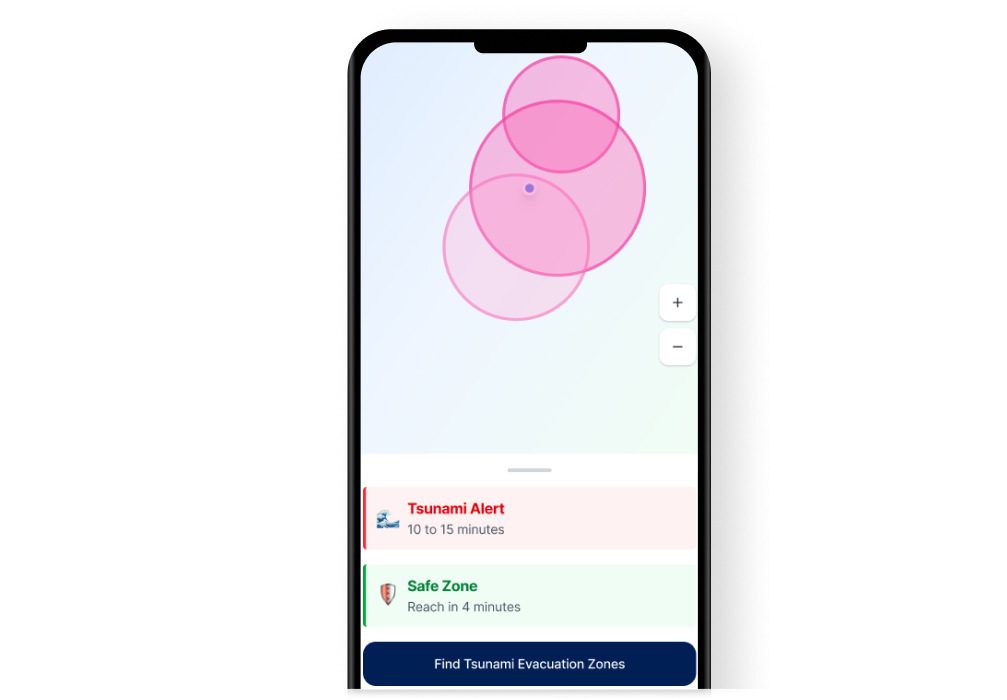

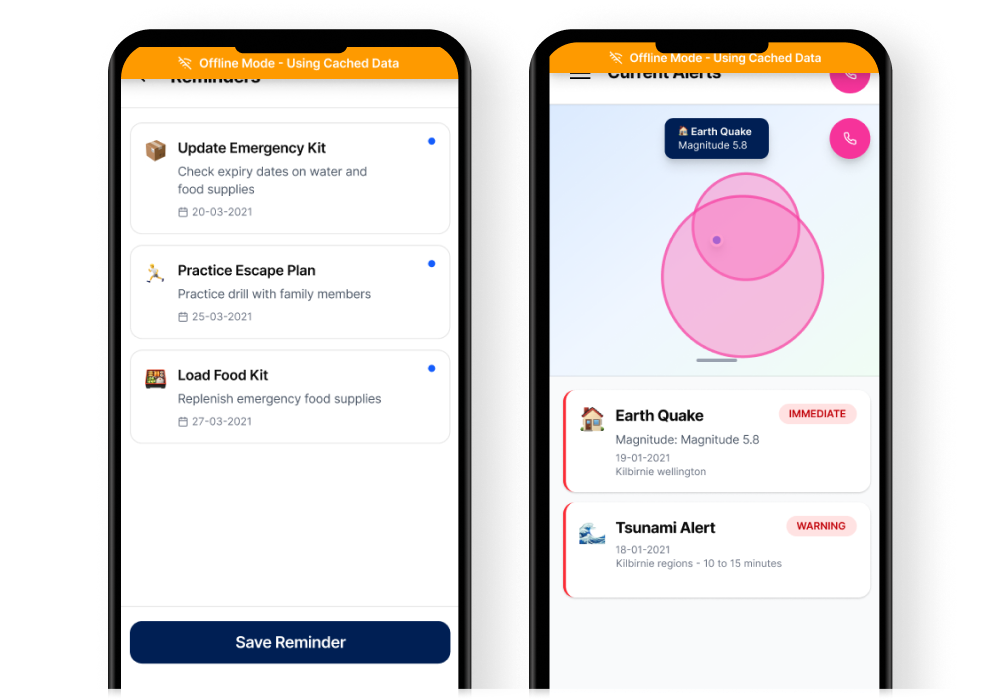

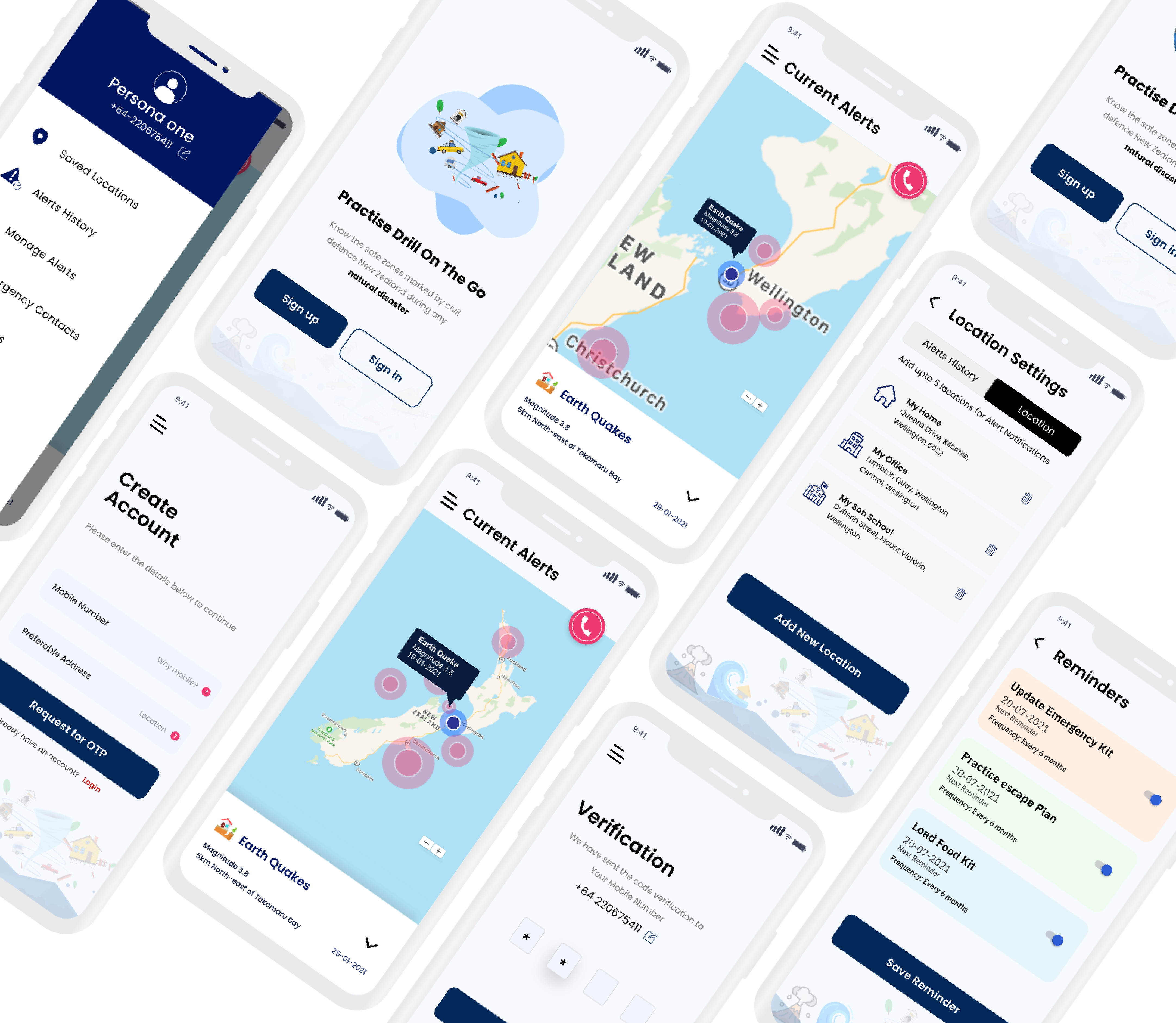

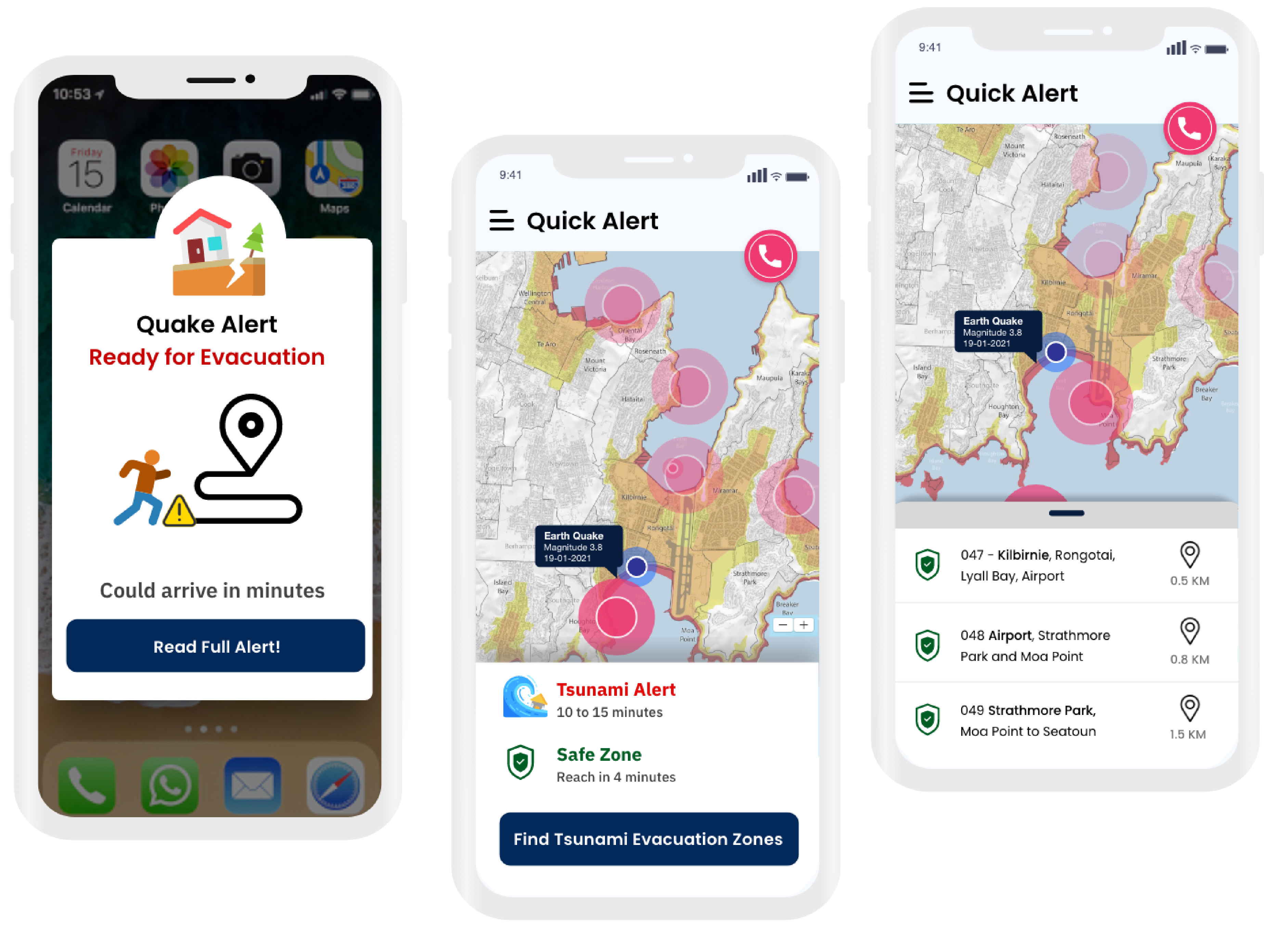

New Zealand sits on the Pacific Ring of Fire. Earthquakes, tsunamis, volcanic eruptions, and floods are not hypothetical; they're recurring events. I designed a mobile app that helps people find the safest route to an evacuation point in real time, when panic is at its peak.

Role: Lead UX/UI Designer

Duration: 6 months

Platforms: iOS & Android